At Themeisle, we believe in hands-on testing of every product we recommend. Our reviews are based on real experiences (real testing, real time, and money invested), not just specifications or marketing materials. Here’s how we ensure our recommendations are trustworthy and valuable to you.

Our testing philosophy

We approach product testing with three key principles:

- Real-world usage: We test products in environments that mirror how you would actually use them.

- Consistent methodology: Each product category follows specific testing processes to ensure fair comparisons.

- User-focused evaluation: We prioritize aspects that matter most to WordPress users like you.

We believe that the most accurate product evaluations come from people with relevant expertise. That’s why we employ subject matter experts for different product categories.

Each expert brings specialized experience to their testing process, ensuring you get insights that go beyond surface-level observations. Our testers don’t just know how to use these products; they understand how they’re built, what makes them work well, and how to identify potential issues that might impact your site.

Our general testing process

Products we review generally go through these steps:

- Setup and installation: We purchase, install and configure the product ourselves, noting the ease of the process.

- Feature verification: We test every significant feature to ensure it works as advertised.

- Performance assessment: We measure how the product affects site speed and resource usage.

- Compatibility testing: We check compatibility with popular themes and plugins if applicable.

- Support evaluation: We contact customer support to assess response time and quality.

- Value assessment: We compare pricing against features and performance to determine overall value.

This is an ideal scenario. Not all products can be tested using identical steps. We adapt our methodology appropriately for each product category.

Isn’t this super expensive?

Yes, yes it is!

Maintaining all our hosting test accounts, purchasing various plugins, renewing premium licenses, and employing expert testers requires a significant investment (we’ve got invoices to prove it).

Just to give you an idea, we spend hundreds of dollars monthly on hosting accounts alone to ensure our recommendations are based on current experiences.

This is why we must support our website in other ways. The Themeisle blog is primarily funded through affiliate links – that thing where if you click on something and then purchase the product or service, we earn a small commission. Learn more about how we make money and why we chose to do it this way here.

However, we want to be crystal clear: our testing process is in no way influenced by these affiliate relationships. Our editorial staff operates completely independently from our affiliate partnerships team. Our reviewers don’t know how a particular article is monetized and don’t receive any portion of the commissions earned. This strict separation ensures that our recommendations are based solely on the quality of the products and their value to users like you.

We’d rather tell you a product isn’t worth your money (even if we could earn a commission) than damage the trust we’ve built with our community over the years.

How we test web hosting providers

We understand that choosing the right hosting provider can be a daunting task, and we want to make that process easier for our readers. That’s why we developed a comprehensive methodology for testing and reviewing web hosting companies.

Here’s how we do it:

1. We buy each hosting setup on our own

We don’t ever let the hosting company know about our purchases, nor do we share our site addresses with them. We aim to appear just like any other regular customer they have.

Here are some of the hosting companies with which we maintain active web hosting setups, along with their primary server locations:

- Bluehost – Utah, USA 🇺🇸

- DreamHost – California, USA 🇺🇸

- Flywheel – California, USA 🇺🇸

- GoDaddy – Arizona, USA 🇺🇸

- GreenGeeks – Netherlands 🇳🇱

- Hosting.com – Michigan, USA 🇺🇸

- Hostinger – Netherlands 🇳🇱

- HostGator – Utah, USA 🇺🇸

- InMotion Hosting – Virginia, USA 🇺🇸

- Kinsta – South Carolina, USA 🇺🇸

- Namecheap – USA 🇺🇸

- Rocket – East, USA 🇺🇸

- ScalaHosting – Europe 🇪🇺

- SiteGround – Netherlands 🇳🇱

- WP Engine – Oregon, USA 🇺🇸

For the most part, we use standard, entry-level hosting setups with every hosting company. Again, we want to replicate the average customer’s experience as much as possible.

We also do not attempt to configure the setups beyond the default pre-configuration provided by the host itself. This allows us to design our performance tests to be as neutral as possible, giving each host an equal chance, and ensuring our test results reflect the average user’s experience.

These hosting setups are then used for reviews, comparisons, hosting roundups, and other research pieces. Essentially, we use them for all content where firsthand experience is important and valuable.

Other important considerations:

- We don’t equalize the server settings. We want to reward hosts that go the extra mile by fine-tuning their default server configuration. Our reasoning is that the average user will likely benefit from these optimizations as long as they don’t require any user input. We’d argue that most users (at least on entry-level plans) won’t adjust many options in their hosting configurations.

- Sorry, but we won’t make the addresses public. We simply don’t want the companies (or anyone else) interfering with them and skewing the results over time.

2. We run an example test site with each host

Our hosting setups don’t just sit idle. We set up simple template sites on each one.

All sites run on WordPress. ⚙️

We make every site look the same:

- 🎨 All sites use the same theme (Neve) and starter design (see below).

- ✍️ All sites have the same test content, which is the stock content of the starter site design.

- 🔌 All sites also have the same set of popular plugins to add extra load. Right now, the stack includes: All-In-One Security, Starter Sites & Templates by Neve, WP Statistics, WPForms Lite, Yoast SEO.

The idea behind this is to emulate a real website as much as possible. We didn’t want to simply use a basic HTML page with 3KB of content on it. In that scenario, every host would probably do a great job loading it in no time.

The homepages of our sites are around 650KB, which, while still not huge, does manage to feature all the common design elements (which you can see in the screenshot below).

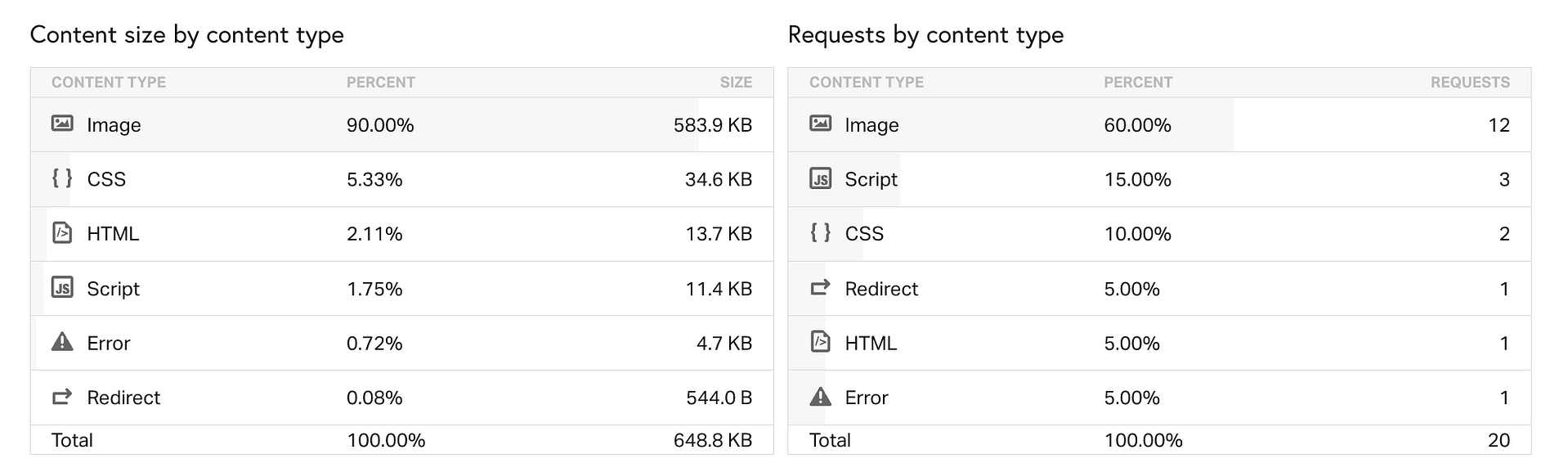

Here’s roughly how the individual resources break down:

We update the entire stack every month – this includes WordPress versions, plugins, and theme.

3. How we set up our tests

We’re primarily interested in each host’s uptime and load times.

Here are some more specifics:

- When tracking site performance, we’re interested in the total load time (“time to fully loaded”) for each of our test setups. Metrics like initial response times or FCP times can be misleading and aren’t truly representative of an actual user’s experience or perceived speed of the site. That’s why we went the distance and decided to measure the time it takes to load the page entirely.

- We test our sites from multiple locations around the globe (6).

- We emulate an actual web connection and user browser. The idea behind it is that we didn’t want to make it into a lab-like, synthetic experiment where every host looks great.

- We do multiple tests (5) per site per location, and then average out the numbers to get the final “typical” load time. This means that each host is tested 30 individual times every month.

The tests run through WebPageTest. We configure them to use what WebPageTest calls a “native” connection (with no traffic shaping) and the desktop version of Chrome as the end-user environment.

We use the following testing locations:

- N. Virginia, USA 🇺🇸

- California, USA 🇺🇸

- Utah, USA 🇺🇸

- London, UK 🇬🇧

- Paris, France 🇫🇷

- Mumbai, India 🇮🇳
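To illustrate the arithmetic behind the “typical” load time, here is a minimal sketch in Python. It is an illustration only, not the actual tooling behind these tests: the function names and the sample timings are made up, and it assumes each run's “time to fully loaded” has already been collected in seconds.

```python
from statistics import mean

# The six testing locations listed above.
LOCATIONS = [
    "N. Virginia, USA",
    "California, USA",
    "Utah, USA",
    "London, UK",
    "Paris, France",
    "Mumbai, India",
]


def per_location_averages(runs):
    """Average the fully-loaded times (in seconds) for each location."""
    return {location: mean(times) for location, times in runs.items()}


def typical_load_time(runs):
    """Average across every individual run (6 locations x 5 runs = 30)."""
    all_times = [t for times in runs.values() for t in times]
    return mean(all_times)


# Hypothetical sample data: each location gets the same five made-up timings.
sample_runs = {location: [1.0, 1.2, 1.1, 0.9, 1.3] for location in LOCATIONS}

total_runs = sum(len(times) for times in sample_runs.values())
print(total_runs)
print(round(typical_load_time(sample_runs), 2))
print(per_location_averages(sample_runs)["London, UK"])
```

Because every location contributes the same number of runs, averaging all 30 runs at once gives the same result as averaging the six per-location averages.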

To keep track of uptime, we use UptimeRobot. It monitors uptime 24/7 and records every second of downtime. It also gives us one year of history. We then copy the numbers into a separate spreadsheet so that we don’t lose any data older than one year.
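As a rough illustration of how recorded downtime translates into an uptime figure, here is a minimal sketch in Python. The function name and the example downtime are hypothetical, and the sketch does not use UptimeRobot's own API; it only shows the underlying percentage calculation.

```python
def uptime_percentage(downtime_seconds, period_seconds):
    """Return uptime as a percentage of the monitored period."""
    if period_seconds <= 0:
        raise ValueError("period_seconds must be positive")
    if not 0 <= downtime_seconds <= period_seconds:
        raise ValueError("downtime_seconds must be between 0 and period_seconds")
    return 100.0 * (period_seconds - downtime_seconds) / period_seconds


SECONDS_PER_DAY = 24 * 60 * 60
thirty_days = 30 * SECONDS_PER_DAY  # 2,592,000 seconds

# Hypothetical example: 26 minutes of downtime over a 30-day period.
print(round(uptime_percentage(26 * 60, thirty_days), 3))
```

Even a short outage is visible at this resolution, which is why second-level downtime records are useful when comparing hosts whose uptime figures are all close to 100%.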

You can check the uptime for all our test accounts on our hosting status page. If you want to see how a specific hosting provider is performing, just head to its dedicated page.

Server location vs testing location

As you’ve seen above, our hosting setups are located in a handful of different places, and we then test their performance from six more specific locations around the globe. This creates many combinations and impacts our testing results.

Given that the primary server is set in only one specific location, this can have an impact on the individual loading times, especially when measured from further away.

Traditionally in web hosting, the further a site is from the user, the longer it will take for that user to see the site. Therefore, some of our load times for any given host can be worse when measured from a location further away from the server.

We believe this is not a bug in our testing methodology, but a feature. We show the realistic experience that a random customer will have with a hosting company. A customer will choose their server to be located in just one place. The loading times to that server from other places can therefore be a bit worse, and this is to be expected. Our goal here is not to make hosting companies look as good as possible, but as real as possible.

Now, we’re using the word “can” here – saying that the results “can” be worse, for example. But in practice, they don’t always have to be. One way that some hosting companies mitigate this is by setting up additional optimizations that come enabled for all user accounts by default so that the site can load faster from all locations. We consider this fair game. If a web host does something that improves the experience for all their users, then that’s great, and it will be reflected in our tests.
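To put rough numbers on the distance effect described above, here is a minimal sketch in Python that estimates the lowest possible network round trip between a server and a testing location. Everything in it is an assumption for illustration: the coordinates are approximate, a server listed as “Netherlands” is assumed to sit in Amsterdam, and the signal speed is the commonly cited approximation for light in optical fiber (about two-thirds of its speed in a vacuum). Real routes are longer than the great-circle path, and loading a page takes many round trips, so actual differences are larger.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
FIBER_SPEED_KM_PER_S = 200_000.0  # approximation: about 2/3 of light speed

# Approximate (latitude, longitude) pairs; assumptions for illustration.
AMSTERDAM = (52.37, 4.90)  # assumed location for a "Netherlands" server
LONDON = (51.51, -0.13)
MUMBAI = (19.08, 72.88)


def great_circle_km(a, b):
    """Haversine distance in kilometers between two (lat, lon) points."""
    lat1, lon1 = map(radians, a)
    lat2, lon2 = map(radians, b)
    h = (
        sin((lat2 - lat1) / 2) ** 2
        + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def min_round_trip_ms(a, b):
    """Lower bound on one network round trip, in milliseconds."""
    return 2 * great_circle_km(a, b) / FIBER_SPEED_KM_PER_S * 1000


for name, point in [("London", LONDON), ("Mumbai", MUMBAI)]:
    print(name, round(min_round_trip_ms(AMSTERDAM, point), 1), "ms")
```

Under these assumptions, a single round trip from Mumbai to the assumed server takes many times longer than one from London, before any server processing is counted.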

4. How we write hosting reviews

There are a couple of important elements to a quality review:

First off, we work with a team of experts who have years of experience in the web hosting industry. They evaluate each hosting company and provide their honest opinions.

We incorporate the results of our performance testing (as described above) into our reviews.

One of the most important factors when choosing a web hosting provider is pricing. We thoroughly review the pricing options of each hosting company we test, including the cost of different plans, any hidden fees, and any available discounts.

The features offered by a hosting provider are also crucial to consider. We evaluate the features provided by each hosting company, including the amount of storage and bandwidth, the number of domains allowed, and the availability of tools like website builders and ecommerce platforms.

In addition to our own research, we also conduct a recurring hosting survey (the only such survey in the industry) on our sister site WPShout, where we ask real users about their experiences with different hosting providers. We take into account the feedback we receive from our readers and the broader web hosting community to ensure that our reviews are comprehensive and unbiased.

We believe that thorough, honest testing is the foundation of trustworthy recommendations. While no testing process is perfect, we continually refine our methods to provide you with the most reliable information possible to make informed decisions for your WordPress site.

Last but not least, we regularly update our reviews to ensure they reflect the most current information available.

…

Have questions about our testing methodology? Feel free to reach out to us at friends@themeisle.com.

Interested in learning more about our editorial standards? Read our Editorial Policy.